As you may already know, the Lakehouse is an architecture developed by Databricks. It is built on top of a data lake and made possible by the Delta Lake storage format. This approach offers many benefits to data teams, but I won't focus on them here; instead, I'd like to delve into a security topic: encryption of data at rest.

A Lakehouse can be GDPR compliant, but unlike Azure SQL Database it offers no native features such as Dynamic Data Masking or Always Encrypted. This means that anyone with sufficient permissions can read personally identifiable information (PII) as plain text with a simple SQL query.

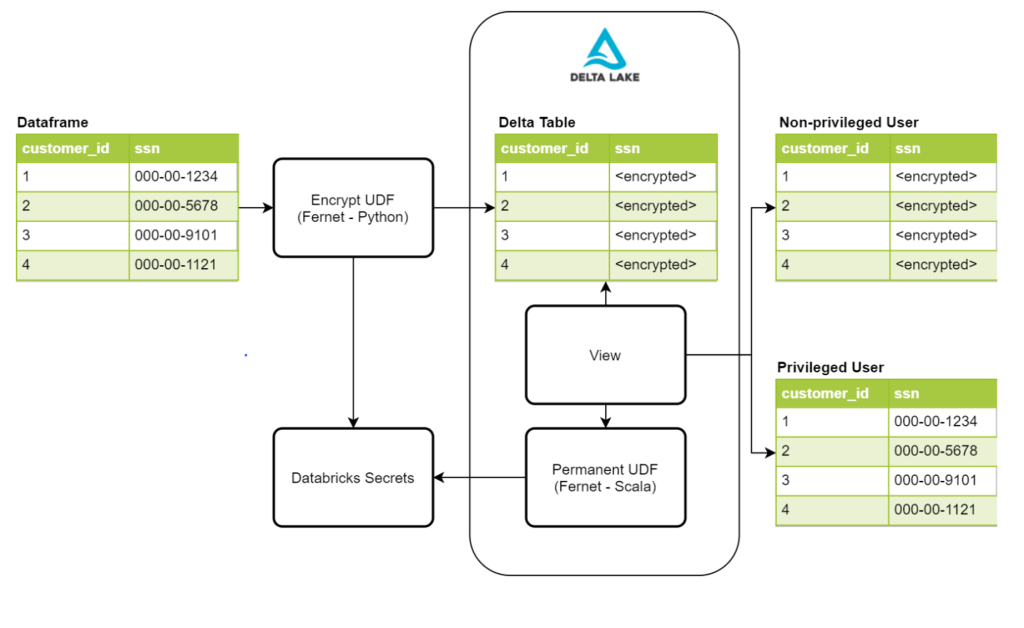

This is where a user-defined function (UDF) can make your life easier: you can write a simple function that applies column-level encryption and reuse it whenever a sensitive field requires it.

To do this, you just need to import an encryption library (the Python `cryptography` package is a common choice) and start writing your Python UDF.
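Here is a minimal sketch of what such a UDF could look like, assuming the `cryptography` package and its `Fernet` recipe (symmetric, authenticated encryption). The helper names `encrypt_value`, `decrypt_value` and `encrypt_columns` are hypothetical, chosen for illustration only; they are not part of any Databricks API. PySpark is imported lazily so the plain helpers can be reused and tested outside a cluster.

```python
from typing import Iterable, Optional

from cryptography.fernet import Fernet


def encrypt_value(plaintext: Optional[str], key: bytes) -> Optional[str]:
    """Encrypt one string with Fernet (AES-128-CBC + HMAC-SHA256); NULLs pass through."""
    if plaintext is None:
        return None
    return Fernet(key).encrypt(plaintext.encode("utf-8")).decode("ascii")


def decrypt_value(token: Optional[str], key: bytes) -> Optional[str]:
    """Reverse encrypt_value; Fernet raises InvalidToken for a wrong key or tampered data."""
    if token is None:
        return None
    return Fernet(key).decrypt(token.encode("ascii")).decode("utf-8")


def encrypt_columns(df, columns: Iterable[str], key: bytes):
    """Hypothetical helper: return a Spark DataFrame whose listed columns hold ciphertext."""
    # Lazy imports: only needed when a Spark DataFrame is actually processed.
    from pyspark.sql.functions import col, udf
    from pyspark.sql.types import StringType

    # The key is captured by the closure and shipped to the executors with the UDF.
    encrypt_udf = udf(lambda v: encrypt_value(v, key), StringType())
    for name in columns:
        df = df.withColumn(name, encrypt_udf(col(name)))
    return df
```

In a notebook you might call `encrypt_columns(df, ["email", "phone"], key)` before writing the result to a Delta table, and register `decrypt_value` with `spark.udf.register` for the users who are allowed to read plain text. The key itself should come from a secret store (for example a Databricks secret scope) rather than being hard-coded. Note that Fernet uses a random IV, so the same value encrypts to a different token each time: this is safer, but it means encrypted columns cannot be joined or filtered by equality.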

This Databricks article is worth reading if you want to learn how to apply column-level encryption to your Delta tables and raise the security level of your data.